I wanted to do some REST client stuff in PHP with cURL today. It has the neat CURLOPT_PINNEDPUBLICKEY option that one can use to pin the server’s public key. Unfortunately it’s a bit cumbersome: cURL expects a base64-encoded SHA-256 hash of the public key (prefixed with `sha256//`), not the hex fingerprint the browser displays if you look at the cert directly (e.g.: 529728d7c43746c0bb02ac4a4c3bffb7028ac1591ecb08b6a0721a660aa1d3ce). The browser value is a hash over the whole certificate, so it cannot simply be re-encoded. Luckily we can get the correct value using this one command:

```
openssl x509 -pubkey < cert.crt | openssl pkey -pubin -outform der | openssl dgst -sha256 -binary | base64
```

You can download the cert using Chrome or Firefox and then use the openssl command above to get the correct base64-formatted string (e.g.: WcHR5sNo7T3ZDL6b/dLgFFETh4tdkm8SAHXfqKLo9wo=). The same works for the Symfony HTTP Client library, which expects the same format (it is passed on to cURL) for the peer_fingerprint option entry. The official docs unfortunately do not mention this at all and just replace the formatted value with ‘…’. Oh well.
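To check that the pipeline really yields the public-key hash, here is a small sketch. It assumes a throwaway self-signed certificate generated locally (the temporary file names and the `pin_from_cert` helper are made up for illustration). It computes the pin with the same openssl steps (with `-noout` added so only the key is printed) and cross-checks it against the hash of the public key derived directly from the private key. The printed value is what cURL takes, e.g. `curl --pinnedpubkey "sha256//$PIN" https://example.com/`, or in PHP as the CURLOPT_PINNEDPUBLICKEY value.

```shell
#!/bin/sh
# Sketch: compute a CURLOPT_PINNEDPUBLICKEY-style pin (base64 of the
# SHA-256 over the DER-encoded public key) and cross-check it.
set -eu

# Hypothetical helper: print the pin for a PEM certificate file.
pin_from_cert() {
    openssl x509 -pubkey -noout -in "$1" \
        | openssl pkey -pubin -outform der \
        | openssl dgst -sha256 -binary \
        | base64
}

workdir=$(mktemp -d)
trap 'rm -rf "$workdir"' EXIT

# Throwaway self-signed certificate, only for demonstration.
openssl req -x509 -newkey rsa:2048 -nodes -days 1 \
    -subj "/CN=localhost" \
    -keyout "$workdir/key.pem" -out "$workdir/cert.pem" 2>/dev/null

PIN=$(pin_from_cert "$workdir/cert.pem")

# Hashing the public half of the private key must give the same pin,
# which shows the value depends on the key, not on the whole certificate.
PIN_FROM_KEY=$(openssl pkey -in "$workdir/key.pem" -pubout -outform der \
    | openssl dgst -sha256 -binary | base64)

[ "$PIN" = "$PIN_FROM_KEY" ] || { echo "pin mismatch" >&2; exit 1; }
echo "sha256//$PIN"
```

Because the pin covers only the key, it survives a certificate renewal as long as the server keeps the same key pair.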

Unwichtig

Unimportant remarks about unimportant things

Why software matters

Years ago I worked at a consulting company writing software for a German car manufacturer. This project was a multi-year endeavor and had all the bits and pieces in place to become the next generation of legacy software that nobody wants to touch. We are talking about a multi-million investment and a 50-person team of engineers, business analysts, architects and managers. We were essentially building a Java EE monolith with a JavaScript-backed frontend. Back then, in 2017, Kubernetes was starting to emerge as an interesting option and we experimented with deployments, but it didn’t affect the architecture decisions much. We continued to build a humongous monolith, and the project was only set to go live after the core functionality had been built. About four years in, the team was finally ready to launch the first alpha to select customers. Yes, you read that right: we proceeded with development for a long period of time without ever testing whether the architecture and resulting system could sustain the load. Internally we were doing Scrum, but only occasionally did we do a hand-over to fit the car manufacturer’s waterfall project planning. I left the consulting company before the system went live. […]

The simplest CI setup ever

This weekend I started to go back and look at a few of my projects. A few years ago I set up most of them using a mix of Travis CI for building/testing, Docker Hub for image building/hosting, and then somehow wired it all together with a custom stack. Today this feels outdated and overly complex. As an SRE I’m allergic to complexity. So I spent a few hours checking out the new GitHub Packages and Actions.

Pricing: both Packages and Actions come with an unlimited option for open source projects and a nice free tier for private repositories. For Actions you get 2000 build minutes per month; for Packages, 500MB of storage is included. There is more fine print for data transfer, but it seems really generous and will mostly suffice for any small to medium-sized project. (Don’t mine bitcoin in the GitHub Actions runner please :-D)

Setup: GitHub has a somewhat unfair advantage here, since their stuff is built right into the UI. But that is exactly why it’s nice. GitHub has been amazing at creating clear and understandable user interfaces. Compared to the mess that Docker Hub is, I can only applaud them for a job well done. […]

Chase your Dreams

At age 10, I first got to know computers through a book from my brother called “C++ for game developers”. Even though I didn’t have the slightest idea of coding then, and the book started out pretty steep with all kinds of things, over two to three years I managed to get through to the last page. After that I discovered PHP and web programming. It was the complete opposite of what C++ had to offer: get things done easily, quickly and without too much complexity. That’s where I developed my first few complex Web 2.0 applications. Already then I realized that I’m not a complete loser at this whole programming thing. And let me tell you: it was a lot of fun! So quite early, at the age of 14, I decided to go to a technical high school that would encourage me to dive deeper into computer science. At the HTL I also learned a lot about how a computer’s electronic circuits work and why things make sense the way they were designed. Back then I already set my goals high to become a professional in this field. My professors in high school especially encouraged me to shoot for the stars. […]

Kubernetes: proxy requests without additional pods

Sometimes you need to provide legacy access to various downloads or proxy some requests to a different endpoint that might not be running in your cluster. You can natively redirect such requests without having to add additional deployments/containers to your Kubernetes cluster. There is a special type of Kubernetes Service object, ExternalName, that simply points to an external DNS name. This isn’t documented all too well, but eventually you will find enough issues and pointers to frankenstein a solution together. For anybody else looking for how to do this correctly, here is a rundown with nginx-ingress-controller:0.19.0 that worked for me. First we create a normal Ingress object that allows us to terminate SSL, look at the path of the HTTP request and decide whether the request should be proxied. Let’s quickly take a look at what is going on here. We first configure our Ingress to use the nginx controller and enable automatic certificate provisioning via our cluster’s ACME/cert-manager setup. Then we instruct nginx to rewrite the target URL to add the bucket. This way, we can swap buckets without the user-facing URL having to change. (Notice to not include […]
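A minimal sketch of the Service half of this setup, assuming the external target is an object-storage host such as `my-bucket.s3.amazonaws.com`, the Service is called `legacy-downloads`, and the upstream speaks plain HTTP on port 80 (all three are hypothetical, not taken from the post). A Service of type ExternalName makes cluster DNS answer with a CNAME to the external name, so no pods are needed. The hypothetical `render_external_service` helper only prints the manifest so it can be piped into `kubectl apply -f -`.

```shell
#!/bin/sh
# Sketch: print an ExternalName Service manifest that maps a Service name
# to an external DNS name (no deployments or pods involved).
set -eu

# Hypothetical helper: render_external_service NAME EXTERNAL_HOST [PORT]
render_external_service() {
    name=$1
    external_host=$2
    port=${3:-80}   # assumed plain-HTTP upstream by default
    cat <<EOF
apiVersion: v1
kind: Service
metadata:
  name: ${name}
spec:
  # Cluster DNS answers with a CNAME to spec.externalName.
  type: ExternalName
  externalName: ${external_host}
  ports:
    - port: ${port}
EOF
}

# Usage (hypothetical names):
#   render_external_service legacy-downloads my-bucket.s3.amazonaws.com \
#       | kubectl apply -f -
```

The Ingress then names this Service as its backend. The ExternalName Service does no proxying itself; it is only a DNS alias, and nginx does the actual request forwarding.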

The road to Kubernetes: hosting your own cluster on VMs

I finally wanted to set up my own Kubernetes cluster, as everyone I talk to says it’s the hottest shit. I’m using three VMs, hosted at Netcup, running the latest Debian 9 Stretch build. I’ve installed the most basic tools and also already set up Docker using this amazing Ansible role. Make sure to disable any swap you have configured; kubelet will not start otherwise. The documentation on how to install things is pretty good, but I missed some details that I banged my head on, so I will copy most snippets over for future reference. Keep in mind that this might have already changed and may no longer work at the time you read this.

First install all needed CLI tools on each of the three hosts:

```
apt-get update && apt-get install -y apt-transport-https
curl -s https://packages.cloud.google.com/apt/doc/apt-key.gpg | apt-key add -
cat <<EOF >/etc/apt/sources.list.d/kubernetes.list
deb http://apt.kubernetes.io/ kubernetes-xenial main
EOF
apt-get update
apt-get install -y kubelet kubeadm kubectl
```

Start the systemd service for kubelet, the Kubernetes node agent, also on every node:

```
systemctl enable kubelet && systemctl start kubelet
```

Now the docs are a bit unspecific, but here’s a command to set the correct cgroup on Debian 9. […]
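The swap requirement is easy to trip over again after a reboot, because `swapoff -a` only lasts until the next boot. A small sketch, assuming swap is configured through `/etc/fstab` (the `comment_out_swap` and `disable_swap` helpers are hypothetical): turn swap off right away and comment out the fstab swap entries so it stays off.

```shell
#!/bin/sh
# Sketch: keep swap disabled for kubelet, now and after reboots.
set -eu

# Hypothetical helper: comment out every active swap entry in an fstab file.
# A line counts as a swap entry when its third field (fs type) is "swap".
comment_out_swap() {
    fstab=$1
    tmp=$(mktemp)
    awk '!/^[ \t]*#/ && $3 == "swap" { print "#" $0; next } { print }' \
        "$fstab" > "$tmp"
    # Write back in place so the file keeps its owner and permissions.
    cat "$tmp" > "$fstab"
    rm -f "$tmp"
}

# Run as root on each node before starting kubelet.
disable_swap() {
    swapoff -a
    comment_out_swap /etc/fstab
}
```

Calling `disable_swap` as root on each of the three VMs covers both the running system and future boots; already commented lines are left untouched, so running it twice is harmless.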

Bachelor thesis published

So in the last four months (October 2015 to January 2016) I had the pleasure of working with a team of students at Texas A&M University. I got to experience a whole different culture of studying and learning, a different approach to tackling the issues of the future.

Compared to German universities, American universities have adopted a class-like style of teaching even in higher education. While we at the TUM have to aggregate and learn most of the material ourselves, students at A&M tend to get the content and materials prepared in the form of textbooks.

My main goal, though, was to work on my bachelor thesis, in which I looked at a new way to develop web applications using Meteor and Angular to integrate wearables into the web experience.

You can download the thesis here. It’s also available on the website of the “Lehrstuhl für Betriebssysteme” (Chair of Operating Systems).

Featured on Codementor – 25 PHP Interview Questions

I’m glad to be part of the amazing community at codementor.io. In the past years I’ve been able to talk to and help many different people with a range of problems. Often some hints and good advice were enough to point them in the right direction, and I really enjoy teaching other people. Codementor recently approached me and asked if I could contribute some questions from my experience over the last years to their blog post “25 PHP Interview Questions”. I’m happy they found my suggestions useful and featured me in that article! I hope it helps some developers who are just getting to know the world of PHP and all its hiccups. Don’t forget to check out my profile on Codementor!

The success of Cities: Skylines

After EA’s SimCity turned out to be such a flop, a big gap opened up in the market. With moves like ‘online-only play’ and a limited map area that was rather meager, they simply couldn’t win the players over. Many things were improved, but certain trade-offs were made that kept the game from becoming a blockbuster, even though the anticipation and hopes beforehand had been enormous. Colossal Order kept its ears open at that moment and, with its predecessor Cities in Motion, which leans more towards Transport Tycoon, had a solid foundation for a SimCity-style game. These were the biggest criticisms of the old game:

- Always-online requirement
- Limited city sizes
- Crappy traffic management
- City interaction is a nice feature, but nobody wants to switch context and start over completely from scratch.
- Squeezing money out of players with DLC that was available a few weeks after release

The problem is this: gamers who play such sims or strategy games are casual players. It’s not about always playing the newest version; the game has to work well and smoothly. The trick with DLC and a yearly ‘new’ shooter edition (see Call of Duty and the like) works for […]

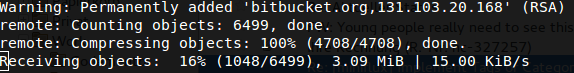

Arrrghhh Bitbucket!

I’d rather send a carrier pigeon, it would get there faster. It’s really time to switch to GitLab.